Feedback Debt and How to Spot Duplicate Requests Across Support, Sales, and Forums

Jamie

Feedback debt is a real product risk

Most teams know what technical debt looks like. Feedback debt is quieter: it’s the growing backlog of unlinked, duplicated, and context-less customer requests spread across inboxes, ticketing tools, call recordings, and community threads. The cost isn’t just messiness. It’s misprioritization (building for the loudest channel), slow decisions (re-litigating the same request), and weak communication (no single place to close the loop).

The underlying pattern is predictable: the same need gets repeated in different words, by different personas, in different systems. Detecting those duplicates early—and turning them into one actionable insight—is the fastest way to reduce noise and improve roadmap confidence.

Where duplicate requests hide and why they multiply

Duplicates rarely look like carbon copies. They show up as:

- Support tickets describing symptoms (“Export times out”) rather than intent (“Need data offline”).

- Sales calls framed as deal blockers (“Security review fails without SSO”).

- Forums and communities phrased as feature ideas (“Add workspace templates”).

- Internal Slack/Teams messages paraphrasing customer quotes or escalations.

They multiply because each team documents feedback in its own language, at its own level of detail. Support tends to capture steps and urgency; sales captures revenue impact and timing; forums capture peer validation and edge cases. If you don’t unify those perspectives, you end up with three “different” requests that are actually the same underlying job.

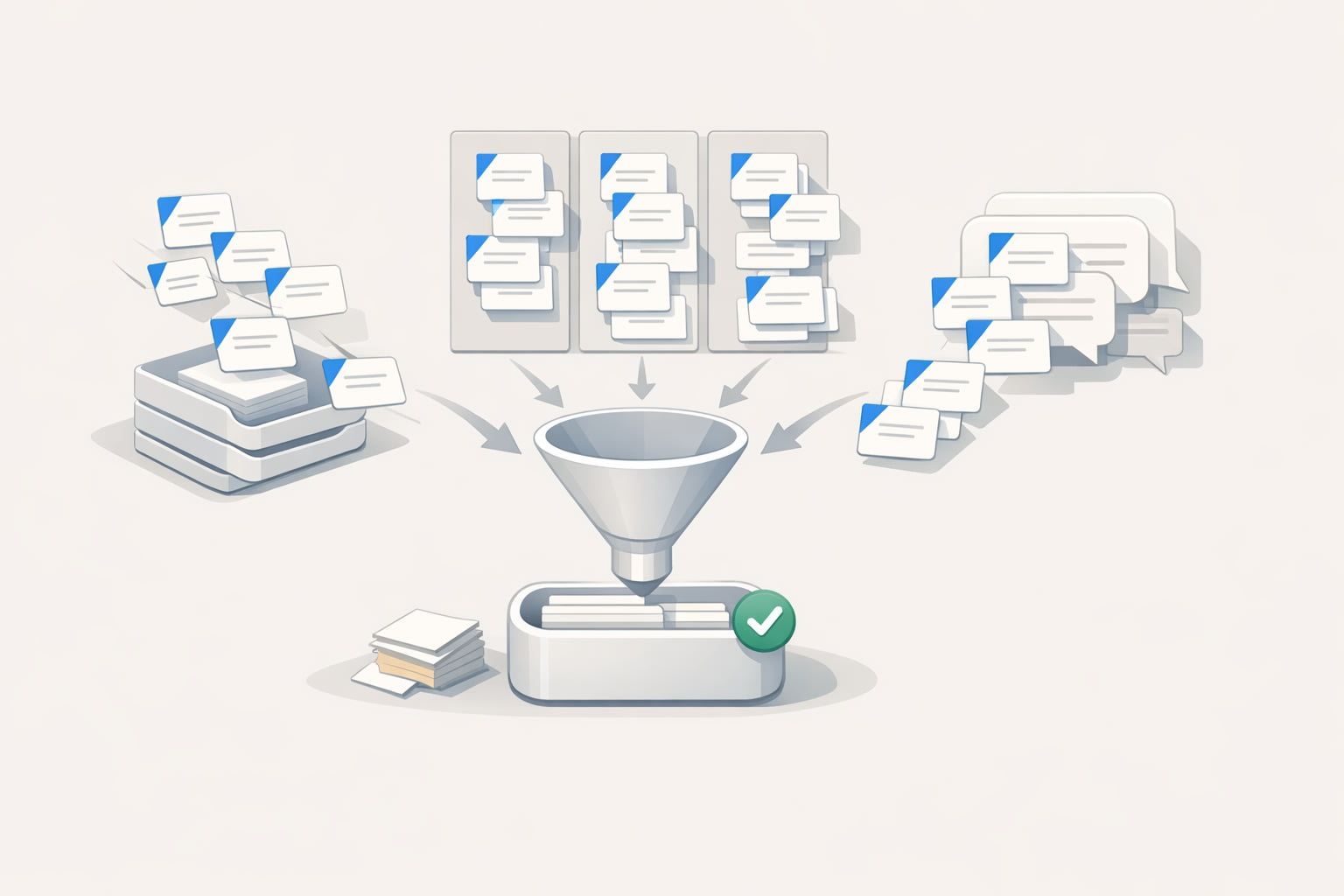

How to detect duplicates across channels without drowning in process

1) Normalize the input before you try to deduplicate

Deduplication fails when you try to compare raw text from different sources. Instead, standardize each item into a small set of fields that make matching possible:

- Problem statement (one sentence, user-facing)

- Desired outcome (what success looks like)

- Context (persona, environment, workflow)

- Evidence (links to ticket, call timestamp, forum thread)

This “compression” step is also where teams often rediscover that multiple requests are the same job with different surface details. If you already use Jobs-to-Be-Done language, you can make this faster by mapping feedback into outcomes and context. (If you want a lightweight method for that, this guide on turning raw interview notes into a JTBD journey map is a practical companion.)

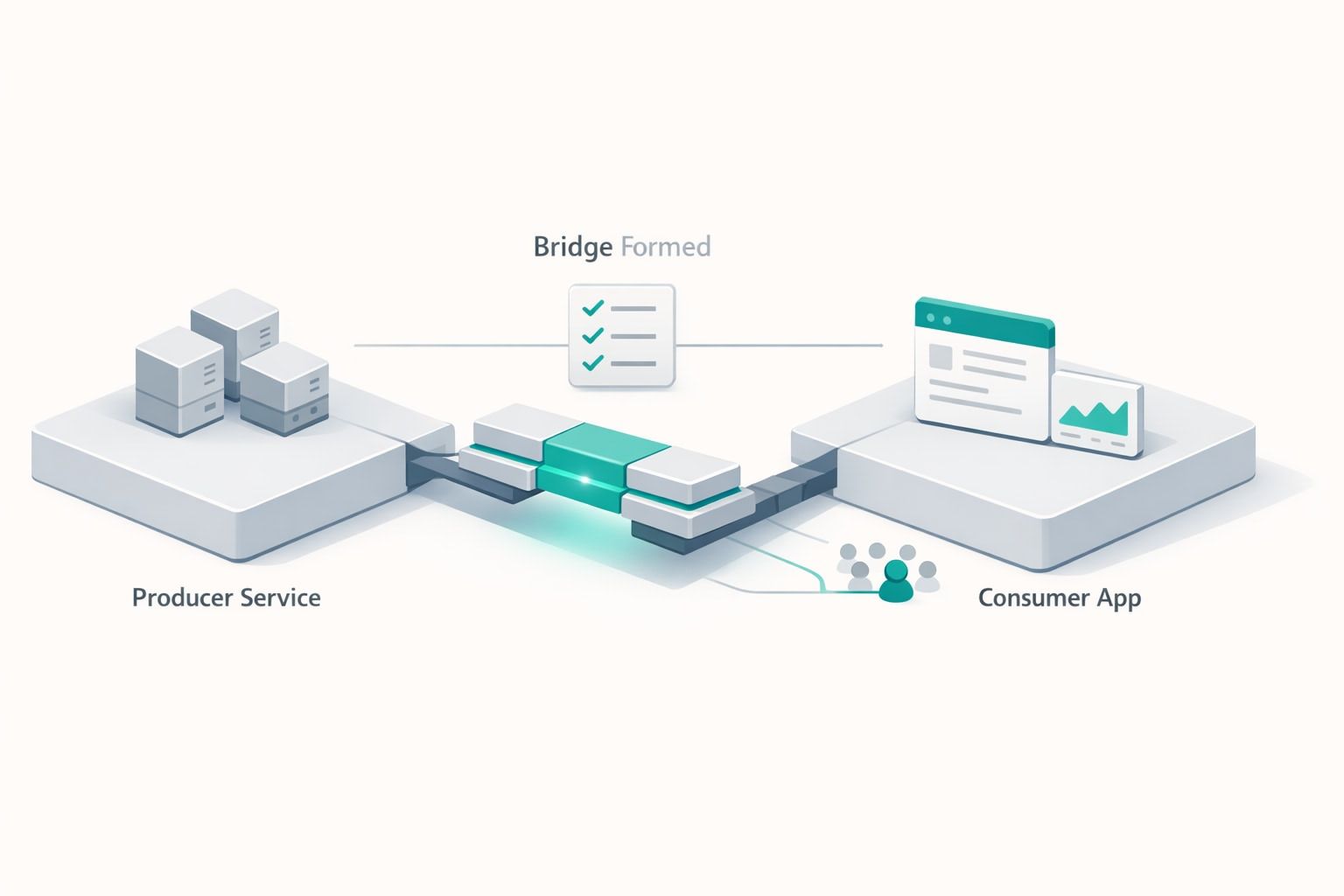

2) Create a canonical request record and attach everything to it

A duplicate becomes harmless when you have a “home” for it. The goal is one canonical record per underlying need, with every related piece of feedback attached as supporting evidence. That canonical record should answer:

- What is the request, in plain language?

- Who is asking (segments, plans, ARR exposure)?

- How often does it come up and where?

- What are the top variations (workarounds, constraints, edge cases)?

This is where a centralized feedback system matters more than spreadsheets. In canny.io, teams typically keep a single post per request and attach feedback from multiple sources, so the “duplicate” increases signal instead of increasing clutter.

3) Use clustering rules that match how people actually talk

Across support, sales, and forums, duplicates rarely share the same keywords. So rely on matching strategies that go beyond exact phrasing:

- Intent-based matching: group by outcome (“share externally,” “export,” “audit access”).

- Entity matching: detect shared objects (SSO, SCIM, API keys, roles, workspaces).

- Constraint matching: recognize recurring blockers (compliance, scale limits, permissions).

- Workflow matching: identify repeated steps in a process (onboarding, reporting, approvals).

You can implement these as lightweight tagging conventions, or automate them with AI-assisted grouping. Canny’s Autopilot is designed specifically for capturing feedback from tools like Zendesk, Intercom, Gong, and Zoom and deduplicating it, which is useful when the volume is high and the wording is inconsistent.

4) Confirm duplicates with “difference checks,” not debate

When someone claims, “This is a different request,” it often is—just not for the reason they think. Instead of debating, run a quick difference check:

- Different persona? Same need, different priority or constraints.

- Different environment? Cloud vs. on-prem, EU vs. US, browser vs. mobile.

- Different trigger? Same outcome, different moment in the journey.

- Different success metric? Same feature area, different definition of “done.”

If the differences change the solution materially, keep separate records but link them as related. If not, merge and preserve nuance in comments or structured fields.

Turning duplicates into one actionable insight

Deduplication is only useful if it produces an insight you can act on. The practical output is a single, decision-ready brief attached to the canonical request:

- Demand: total count, trend over time, and top channels (support vs. sales vs. forum).

- Segment impact: which customer tiers, industries, and roles care most.

- Revenue exposure: pipeline at risk, expansion potential, renewal risk (when available).

- Urgency signals: frequency spikes, escalations, workarounds, churn mentions.

- Suggested next action: validate, prototype, schedule discovery, or commit to roadmap.

In other words, duplicates become a multiplier for confidence: you’re not just counting requests; you’re learning who needs what, in what context, and what it’s worth.

Operational guardrails that prevent feedback debt from returning

Make capture automatic and routing predictable

Manual copy/paste is where feedback debt begins. Set up a default path for each channel: support tickets flow in with key metadata, sales calls create summarized snippets with timestamps, and forum posts get ingested with engagement signals. Multi-tenant or multi-workspace orgs should also ensure access and secrets are handled cleanly when automations touch customer data; this article on designing multi-tenant automations with per-tenant secrets and RBAC is a good reference point.

Define “merge criteria” and enforce it lightly

Teams move faster when “what counts as the same request” is explicit. A simple rule works: if the desired outcome is the same and the solution path is likely the same, merge; otherwise link as related. Keep it lightweight and written down.

Close the loop from the canonical record

Closing the loop is harder when feedback is fragmented. When a decision is made—research, planned, shipped—update the canonical request and notify everyone attached to it. That’s how you turn a messy stream of duplicates into one clear narrative for customers and internal teams.

What “good” looks like after deduplication

You’ll know you’re reducing feedback debt when product decisions reference a single source of truth, support stops re-triaging the same issue as “new,” sales can point to a live status for key requests, and community threads converge into one place with consistent answers. Duplicate requests don’t disappear—but they stop wasting time and start increasing clarity.